What is the difference between FPGA and GPU?


FPGAs (field-programmable gate arrays) and GPUs (graphics processing units) are two common hardware accelerators used to speed up computation and data processing. Although their uses overlap in many ways, they differ considerably in architecture, design method, and application. In this article, we explore these differences in detail.

Architecture:

An FPGA is a programmable logic device made up of an array of programmable logic cells (lookup tables and registers) connected through a programmable interconnect network. This makes the FPGA highly flexible and reconfigurable, so it can serve a wide range of applications: its logic cells can be reprogrammed as needed, allowing the device to adapt as application requirements change.
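
To make the idea of a logic cell concrete, here is a minimal Python sketch of one, assuming a single k-input lookup table (LUT) that can optionally feed a register (D flip-flop). The names `LogicCell` and `table_from` are hypothetical and model behavior only; real logic cells differ by vendor and device family. Reprogramming the cell simply loads a new truth table, which is the essence of FPGA reconfigurability.

```python
def table_from(fn, k):
    """Build the 2**k-entry truth table of a k-input Boolean function fn."""
    # Entry i holds fn applied to the bits of i (input 0 = least significant bit).
    return [fn(*[(i >> b) & 1 for b in range(k)]) & 1 for i in range(2 ** k)]


class LogicCell:
    """Toy FPGA logic cell: a k-input lookup table (LUT) plus an optional register."""

    def __init__(self, table, registered=False):
        self.registered = registered
        self.q = 0  # flip-flop state, cleared at power-up
        self.reprogram(table)

    def reprogram(self, table):
        """Load a new truth table: the implemented logic changes, the 'silicon' does not."""
        k = len(table).bit_length() - 1
        if k < 1 or len(table) != 2 ** k:
            raise ValueError("truth table length must be a power of two (>= 2)")
        self.k, self.table = k, list(table)

    def lut(self, *bits):
        """Combinational output: the input bits form the table index (bits[0] = LSB)."""
        if len(bits) != self.k:
            raise ValueError(f"expected {self.k} input bits")
        return self.table[sum(bit << i for i, bit in enumerate(bits))]

    def clock(self, *bits):
        """Apply one clock edge; a registered cell outputs what it latched on the previous edge."""
        d = self.lut(*bits)
        if not self.registered:
            return d
        out, self.q = self.q, d
        return out


# Usage: a 4-input AND, then the same cell reprogrammed as a 4-input XOR (parity).
cell = LogicCell(table_from(lambda a, b, c, d: a & b & c & d, 4))
print(cell.lut(1, 1, 1, 1), cell.lut(1, 0, 1, 1))  # 1 0
cell.reprogram(table_from(lambda a, b, c, d: a ^ b ^ c ^ d, 4))
print(cell.lut(1, 0, 1, 1))  # 1
```

Note that the same object changes function without any new "wiring"; only the stored table differs, which mirrors how an FPGA is reconfigured.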

A GPU is a chip built for parallel computing. Its core is a large array of processing units with associated memory. GPUs were originally designed for graphics rendering and processing but are now also widely used for data-parallel computing. Their architecture makes them well suited to massively parallel tasks such as image processing, machine learning, and scientific computing.

Design method:

FPGAs are designed using a hardware description language (HDL) such as VHDL or Verilog. Users write HDL code that describes the logic circuits their application requires. Vendor development tools then synthesize, place, and route the design and convert it into a configuration bitstream, which is loaded onto the FPGA to implement those circuits.
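
Synthesis, placement, and routing are far more involved than this, but the basic step of turning a logic description into configuration bits can be shown with a toy "compiler". The Python sketch below assumes each function fits in a single k-input LUT: it evaluates the function for every input combination and packs the results into an integer, similar in spirit to a LUT's initialization value. The function names and the bit ordering are hypothetical and do not correspond to any vendor's actual bitstream format.

```python
def lut_init(fn, k):
    """Pack the truth table of a k-input Boolean function into one integer.

    Bit i of the result is fn evaluated on the bits of i (input 0 = least
    significant bit). This encoding is a toy, not a vendor bitstream format.
    """
    init = 0
    for i in range(2 ** k):
        bits = [(i >> b) & 1 for b in range(k)]
        if fn(*bits) & 1:
            init |= 1 << i
    return init


def configure(functions, k=4):
    """'Compile' a list of single-LUT functions into hex configuration words."""
    digits = max(1, 2 ** k // 4)  # hex digits needed per LUT
    return [f"{lut_init(fn, k):0{digits}X}" for fn in functions]


def and4(a, b, c, d):
    return a & b & c & d


def xor4(a, b, c, d):
    return a ^ b ^ c ^ d


print(configure([and4, xor4]))  # ['8000', '6996']
```

A 4-input AND is true only for input pattern 15, so only bit 15 is set (0x8000); the parity function is true for every pattern with an odd number of ones, giving 0x6996.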

GPUs are programmed through graphics APIs such as OpenGL or DirectX, or through general-purpose compute platforms such as CUDA. Graphics code is typically written in a shading language such as the OpenGL Shading Language (GLSL), while compute code is written in a language such as CUDA C++. A compiler converts this code into instructions the GPU can execute, and the API or runtime sends those instructions to the GPU to perform the work.
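
GPU compute code is usually written as a kernel that every thread runs on its own piece of data, with each thread deriving a global index from its block and thread coordinates. The Python sketch below only emulates that model serially (a real GPU runs the threads in parallel) and does not call any CUDA API; the names `launch` and `saxpy_kernel` are hypothetical, and the index formula mirrors CUDA's `blockIdx.x * blockDim.x + threadIdx.x`.

```python
def saxpy_kernel(block_idx, block_dim, thread_idx, a, x, y, out):
    """Body of one GPU 'thread': computes out[i] = a * x[i] + y[i] for a single index i."""
    i = block_idx * block_dim + thread_idx  # global index, like blockIdx.x * blockDim.x + threadIdx.x
    if i < len(x):  # bounds guard: the last block may have spare threads
        out[i] = a * x[i] + y[i]


def launch(kernel, n, block_dim, *args):
    """Emulate a 1-D grid launch covering n elements (serially; a GPU runs threads in parallel)."""
    grid_dim = (n + block_dim - 1) // block_dim  # number of blocks, rounded up
    for block_idx in range(grid_dim):
        for thread_idx in range(block_dim):
            kernel(block_idx, block_dim, thread_idx, *args)
    return grid_dim


x = [1, 2, 3, 4, 5]
y = [10, 10, 10, 10, 10]
out = [0] * len(x)
blocks = launch(saxpy_kernel, len(x), 2, 2, x, y, out)
print(blocks, out)  # 3 [12, 14, 16, 18, 20]
```

Because the kernel describes the work for one element only, the same code scales to any array size simply by launching more blocks; the bounds check handles sizes that are not a multiple of the block size.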

Flexibility and performance:

An FPGA is reconfigurable hardware that lets users redesign its circuits as the application changes. This flexibility makes FPGAs ideal for rapid prototyping and custom applications. Although FPGAs can match GPU performance on some specific tasks, their performance is generally lower than that of GPUs.

The GPU is designed specifically to handle large-scale parallel tasks. Optimizations in both its hardware and its software stack give it excellent performance in fields such as graphics rendering, deep learning, and scientific computing. Compared with FPGAs, GPUs generally offer higher compute performance and throughput. However, because a GPU's hardware is fixed and optimized for a particular class of workloads, it is less flexible than an FPGA.

Energy and power consumption:

FPGAs typically consume less power and energy than GPUs for the same workload. One reason is that FPGA logic runs at lower clock speeds, which reduces power consumption. In addition, because the FPGA can be reprogrammed, its circuits can be tailored to the needs of a specific application, further reducing energy consumption.
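
The link between clock speed and power follows from the standard first-order model of CMOS dynamic power, P = α × C × V² × f, where α is the switching activity factor, C the switched capacitance, V the supply voltage, and f the clock frequency. The Python sketch below uses hypothetical parameter values and ignores static (leakage) power. It shows that halving f halves power, while energy per clock cycle (α × C × V²) does not depend on f at all, so lower energy also requires a lower voltage or less switching per result.

```python
def dynamic_power(alpha, capacitance_f, voltage_v, freq_hz):
    """First-order CMOS dynamic power in watts: P = alpha * C * V**2 * f."""
    return alpha * capacitance_f * voltage_v ** 2 * freq_hz


def energy_per_cycle(alpha, capacitance_f, voltage_v):
    """Energy per clock cycle in joules: P / f = alpha * C * V**2 (no dependence on f)."""
    return alpha * capacitance_f * voltage_v ** 2


# Hypothetical parameters: activity 0.1, 1 nF switched capacitance, 1.0 V supply.
fast = dynamic_power(0.1, 1e-9, 1.0, 1e9)    # 1 GHz clock
slow = dynamic_power(0.1, 1e-9, 1.0, 0.5e9)  # 500 MHz clock
print(f"power: {fast:.3f} W at 1 GHz, {slow:.3f} W at 500 MHz")
print(f"energy/cycle at 1.0 V: {energy_per_cycle(0.1, 1e-9, 1.0):.2e} J")
print(f"energy/cycle at 0.8 V: {energy_per_cycle(0.1, 1e-9, 0.8):.2e} J")
```

Dropping the supply from 1.0 V to 0.8 V cuts energy per cycle to 0.64 of its original value, which is why lower clock speeds matter most when they also allow lower voltages.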

The high computing performance of GPUs is usually accompanied by higher power consumption. Due to the large number of parallel computing units and high clock speed requirements, GPUs generally require more energy for the same workload.

Application areas:

FPGAs are mainly used in fields that require low latency, high parallelism, and reconfigurability, such as communications, digital signal processing, embedded systems, and cryptographic algorithms. FPGAs are also widely used for rapid prototyping and for accelerating domain-specific applications.

GPUs are mainly used in graphics rendering, game development, computer vision, machine learning, and scientific computing. Thanks to their high parallel computing capability and relatively low cost, GPUs have been widely adopted in deep learning.

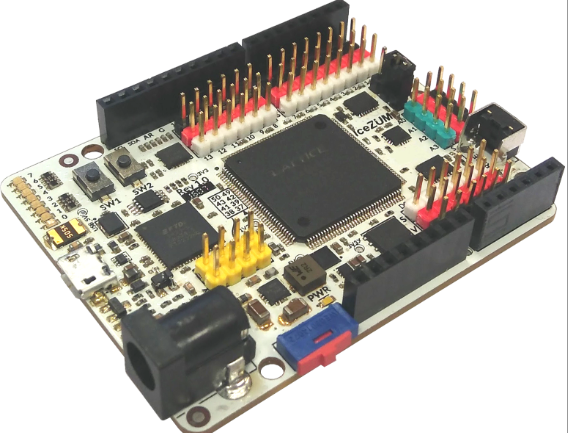

What is an FPGA?

Field-programmable gate arrays (FPGAs) take an entirely different approach from GPUs and can deliver strong performance in some deep neural network (DNN) applications while consuming less power. FPGAs were originally used as glue logic to connect electronic components such as bus controllers or processors, but their range of applications has changed dramatically over time. FPGAs are semiconductor devices that can be electronically programmed to form any type of digital circuit or system, and they offer greater flexibility and faster prototyping than custom chip designs. One of the largest FPGA producers is San Jose, Calif.-based Altera, which was acquired by Intel in 2015. FPGAs differ fundamentally from instruction-based hardware such as GPUs, and their key advantage is that they can be reconfigured to meet the requirements of data-intensive workloads such as machine learning applications.

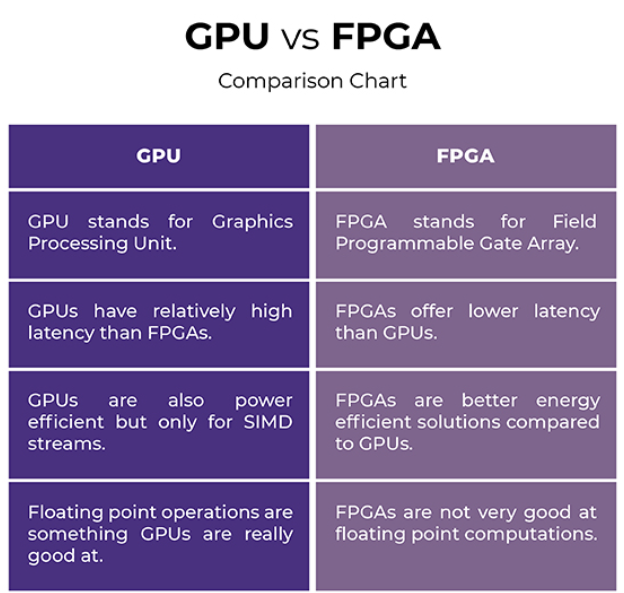

The difference between GPU and FPGA

Technology

– A GPU is a specialized electronic circuit originally designed to accelerate graphics rendering; it is now also used to meet the needs of general scientific and engineering computing. GPUs are designed to operate in a Single Instruction, Multiple Data (SIMD) fashion. By offloading compute-intensive parts of the code from the CPU, the GPU accelerates applications running on the CPU. An FPGA, on the other hand, is a semiconductor device that can be electronically programmed to become any type of digital circuit or system you want.
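
To illustrate SIMD execution, the Python sketch below assumes a hypothetical 8-lane machine and counts the instructions issued by a scalar loop (one add per element) versus a SIMD-style loop (one vector add per group of 8 elements). The lane width and the counting scheme are illustrative only; real GPUs group threads differently (NVIDIA, for example, describes its model as SIMT) and keep many such groups in flight at once.

```python
LANES = 8  # hypothetical SIMD width (lanes per instruction)


def scalar_add(a, b):
    """Scalar execution: one add instruction per element (a and b have equal length)."""
    out, instructions = [], 0
    for x, y in zip(a, b):
        out.append(x + y)
        instructions += 1
    return out, instructions


def simd_add(a, b, lanes=LANES):
    """SIMD execution: one vector-add instruction per group of `lanes` elements."""
    out, instructions = [], 0
    for start in range(0, len(a), lanes):
        # Every lane applies the same operation to its own data element.
        out.extend(x + y for x, y in zip(a[start:start + lanes], b[start:start + lanes]))
        instructions += 1
    return out, instructions


a = list(range(20))
b = [1] * 20
s_out, s_count = scalar_add(a, b)
v_out, v_count = simd_add(a, b)
print(s_out == v_out, s_count, v_count)  # True 20 3
```

Both versions produce the same result, but the SIMD version issues 3 instructions instead of 20, which is where the throughput of SIMD hardware comes from.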

Latency

– FPGAs offer lower latency than GPUs: they can start processing each input as soon as it arrives, with minimal delay. Because the computation is implemented directly as a hardware pipeline rather than as a stream of instructions, FPGAs can reach high compute performance without instruction-processing overhead, which makes them ideal for the most latency-sensitive applications. For such workloads they can deliver results in less time than GPUs, which typically rely on batching many inputs to reach high throughput.
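
A toy model helps explain the latency gap. If a processor waits to collect a batch of B inputs that arrive every t microseconds, the first input in the batch waits (B - 1) × t before computation even starts; a streaming pipeline instead begins on each input as soon as it arrives. The Python sketch below encodes both cases. Every number in it is a hypothetical parameter, not a measurement of any FPGA or GPU.

```python
def batched_latency_us(batch_size, arrival_interval_us, batch_compute_us):
    """Latency of the first input in a batch: wait for the batch to fill, then compute it."""
    fill_wait = (batch_size - 1) * arrival_interval_us
    return fill_wait + batch_compute_us


def streaming_latency_us(pipeline_stages, stage_time_us):
    """Latency when each input enters a hardware pipeline on arrival: one pass through all stages."""
    return pipeline_stages * stage_time_us


# Hypothetical numbers, chosen only to illustrate the shape of the trade-off.
print(batched_latency_us(batch_size=64, arrival_interval_us=1.0, batch_compute_us=50.0))  # 113.0
print(streaming_latency_us(pipeline_stages=20, stage_time_us=0.5))  # 10.0
```

Setting `batch_size=1` removes the fill wait entirely, but then the processor works on one input at a time, which is where batch-oriented hardware tends to lose efficiency.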

Power Efficiency

– Energy efficiency has long been an important performance metric, and FPGAs are known for excelling at it. Circuits implemented in their reconfigurable fabric can sustain very high data throughput through parallel processing. Reconfigurability is the FPGA's greatest strength, and the flexibility it provides gives FPGAs an advantage over GPUs in certain application areas. Many widely used data operations can be implemented efficiently on FPGAs thanks to this hardware programmability. GPUs can also be energy efficient, but mainly for workloads that map well onto SIMD execution.
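
One reason GPU efficiency is tied to SIMD-friendly workloads is branch divergence: when lanes that share one instruction stream take different sides of a branch, the hardware typically executes both paths one after the other and masks off the inactive lanes. The Python sketch below assumes a hypothetical 8-lane group in which each executed path costs one step, and reports how many steps the group needs and what fraction of lane-slots did useful work. It illustrates the effect only and does not model any particular GPU's scheduler.

```python
def simd_branch(values, cond, then_fn, else_fn):
    """Run `then_fn(v) if cond(v) else else_fn(v)` across one SIMD lane group with masking.

    Returns (results, steps, utilization). Each branch path taken by at least one
    lane costs one step for the whole group; lanes not on that path sit idle.
    """
    if not values:
        return [], 0, 0.0
    mask = [bool(cond(v)) for v in values]
    results = [None] * len(values)
    steps = 0
    if any(mask):  # the "then" path executes if any lane needs it
        steps += 1
        for i, v in enumerate(values):
            if mask[i]:
                results[i] = then_fn(v)
    if not all(mask):  # the "else" path executes if any lane needs it
        steps += 1
        for i, v in enumerate(values):
            if not mask[i]:
                results[i] = else_fn(v)
    # Each lane does one useful operation; the group spent steps * len(values) lane-slots.
    utilization = len(values) / (steps * len(values))
    return results, steps, utilization


def is_even(v):
    return v % 2 == 0


uniform = simd_branch([0, 2, 4, 6, 8, 10, 12, 14], is_even, lambda v: v * 2, lambda v: v + 100)
diverged = simd_branch(list(range(8)), is_even, lambda v: v * 2, lambda v: v + 100)
print(uniform[1:], diverged[1:])  # (1, 1.0) (2, 0.5)
```

When all lanes agree, the group finishes in one step at full utilization; when they split, it needs two steps and half of the lane-slots are wasted, even though the results are identical to a scalar execution.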

What is a GPU?

A graphics processing unit (GPU), often loosely called a graphics card or video card, is a processor that handles graphics information for output to a monitor. It is a specialized processor originally designed to accelerate graphics rendering, primarily to improve the graphics performance of games on personal computers. Most consumer GPUs are still built to deliver high graphics performance and visual effects for a realistic gaming experience, but today's GPUs are used far beyond the personal computers in which they first appeared.

Before GPU computing emerged, general-purpose computing was widely assumed to be possible only on CPUs, the first mainstream processors built for both consumer use and advanced computing. GPU computing has grown tremendously over the past two decades and is now widely used in machine learning, artificial intelligence, and deep learning research. GPU programming platforms such as NVIDIA's Compute Unified Device Architecture (CUDA) took it to the next level and paved the way for the development of deep neural network libraries.

GPU vs. FPGA Comparison Chart

Background on FPGA and GPU architecture

The size and complexity of artificial intelligence (AI) models continue to increase by roughly 10 times per year, and AI solution providers are under tremendous pressure to reduce time to market, improve performance, and quickly adapt to changing conditions. As model complexity grows, AI-optimized hardware is emerging to keep up.

For example, in recent years graphics processing units (GPUs) have integrated AI-optimized compute units to improve AI computing throughput. However, as AI algorithms and workloads evolve and grow, they can exhibit properties that make it difficult to fully use the available AI compute throughput unless the hardware is flexible enough to accommodate such algorithmic changes. Recent papers have shown that many AI workloads struggle to achieve the full computational power reported by GPU vendors. Even for highly parallel computations such as general matrix multiplication (GEMM), GPUs achieve high utilization only for matrices of certain sizes. So while GPUs theoretically offer high AI compute throughput (often called "peak throughput"), actual performance when running AI applications can be much lower.
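
One concrete reason GEMM utilization depends on matrix size is tile quantization: GPU matrix-multiply kernels typically split the output matrix into fixed-size tiles, and a partial tile at the edge still costs a full tile's worth of compute. The Python sketch below assumes a hypothetical 128×128 output tile and ignores other effects, such as memory bandwidth and how tiles are scheduled across the GPU's cores, to estimate the fraction of issued work that is useful.

```python
def ceil_div(a, b):
    """Integer ceiling of a / b for positive integers."""
    return -(-a // b)


def tile_utilization(m, n, tile_m=128, tile_n=128):
    """Fraction of computed output elements that are real (not padding) for an m x n GEMM output.

    Assumes every tile, including partial edge tiles, costs a full tile of work.
    The K dimension scales real and padded work equally, so it cancels out.
    """
    padded = ceil_div(m, tile_m) * tile_m * ceil_div(n, tile_n) * tile_n
    return (m * n) / padded


for m, n in [(1024, 1024), (1025, 1024), (129, 129)]:
    print(f"{m} x {n}: {tile_utilization(m, n):.1%} of issued work is useful")
```

Under these assumptions a 1024×1024 output uses every tile fully, adding a single row (1025×1024) forces a ninth row of almost-empty tiles and drops useful work to about 89%, and a 129×129 output uses only about 25% of the work it issues.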

FPGAs offer a different hardware approach to AI optimization. Unlike GPUs, FPGAs offer unique fine-grained spatial reconfigurability: FPGA resources can be configured to perform precise mathematical functions in a precisely defined order to implement the required operations, and the output of each function can be routed directly to the input of the function that needs it. This lets the hardware adapt to the characteristics of specific AI algorithms and applications, improving utilization of the FPGA's available compute power. Additionally, while FPGAs normally require hardware expertise to program (via a hardware description language), specially designed soft-core processing units, known as overlays, allow FPGAs to be programmed in a processor-like manner. With an overlay, programming is done entirely through a software toolchain that hides the FPGA-specific hardware complexity.
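
Chaining functions so that each one's output feeds straight into the next is a spatial dataflow pipeline. Python generators give a rough software analogy: each stage consumes values from the previous stage as they are produced, without buffering the whole dataset. The stage names and the scale, ReLU, and running-sum operations are hypothetical examples; on an FPGA each stage would be its own circuit running concurrently, which this sequential sketch does not capture.

```python
def source(samples):
    """Stage 0: emit input samples one at a time."""
    for s in samples:
        yield s


def scale(stream, weight):
    """Stage 1: multiply each value by a constant weight."""
    for x in stream:
        yield x * weight


def relu(stream):
    """Stage 2: clamp negative values to zero."""
    for x in stream:
        yield max(0, x)


def running_sum(stream):
    """Stage 3: accumulate and emit the running total."""
    total = 0
    for x in stream:
        total += x
        yield total


# Wire the stages output-to-input; each value flows through all stages before the next is read.
pipeline = running_sum(relu(scale(source([1, -2, 3, -4, 5]), weight=2)))
print(list(pipeline))  # [2, 2, 8, 8, 18]
```

The wiring line plays the role of the FPGA's routing: changing which stages are connected, or in what order, changes the computation without rewriting any stage.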

To sum up, FPGAs and GPUs differ in architecture, design method, flexibility, performance, energy consumption, and application areas. FPGAs are more flexible and reconfigurable, and are suited to areas that require rapid prototyping and customized applications. GPUs focus on high-performance parallel computing, especially in graphics rendering, scientific computing, and deep learning. Both play an important role in accelerating computing and processing and provide effective solutions for applications in different fields.

Well-known FPGA manufacturers

Xilinx (now part of AMD): The pioneer and largest manufacturer in the FPGA field.

Altera (now part of Intel): Also a major FPGA manufacturer.

Lattice Semiconductor: Mainly produces low-power and miniaturized FPGAs.

Microsemi (now part of Microchip Technology): Produces FPGAs primarily for the aerospace and military markets.

Each of these manufacturers offers several FPGA families to meet needs ranging from low-end to high-end.

It is worth adding that Jinftry has clear price and channel advantages over its peers in supplying FPGA chips, and keeps multiple models in stock at the same time, such as:

XC9536XL-5PCG44C, XC7VX690T-2FFG1157I, XC3SD1800A-4FGG676C, XC7K325T-1FFG676I, XQ7VX690T-2RF1761I, XC6SLX100-3CSG484I, XC7VX690T-2FFG1158C,

EPM7064STC44-7, EPM7032BTI44-5, EPM7032BLC44-3, EPM7032STC44-7, 10AS016E3F27E2LG, 10AS022C3U19I2SG, 10AS027H1F35I1SG, 10AS057K2F35E2SG,

10AS057K3F40E2SG, and other series, all sold at JINFTRY.COM.

Author:

Jinftry (Hong Kong registered company name: JING FU CAI (HONGKONG) INTERNATIONAL CO., LIMITED) was established in 2013 and is headquartered in Hong Kong, China, with a branch in Shenzhen, China. It is a global supplier of electronic components and a well-known, competitive electronic product distributor in Asia. It is also an excellent strategic partner of global ODM/OEM/EMS companies, able to quickly find authentic and traceable electronic components for customers to purchase.